At their fingertips: Helping surgeons gain better control of robotic hands

Steady hands and uninterrupted, sharp vision are critical when performing surgery on delicate structures, like the brain or hair-thin blood vessels. While surgical cameras have greatly improved what surgeons see during operations, the "steady hand" still needs improvement: new surgical technologies, including sophisticated surgeon-guided robotic hands, cannot prevent accidental injuries when operating close to fragile tissue.

In a new study published in the January issue of the journal Scientific Reports, researchers at Texas A&M University show that delivering small but perceptible buzzes of electrical current to the fingertips can give users an accurate perception of the distance to contact. This insight enabled users to control their robotic fingers precisely enough to land gently on fragile surfaces.

The researchers said that this technique might be an effective way to help surgeons reduce inadvertent injuries during robot-assisted operative procedures.

“One of the challenges with robotic fingers is ensuring that they can be controlled precisely enough to softly land on biological tissue,” said Hangue Park, assistant professor in the Department of Electrical and Computer Engineering. “With our design, surgeons will be able to get an intuitive sense of how far their robotic fingers are from contact, information they can then use to touch fragile structures with just the right amount of force.”

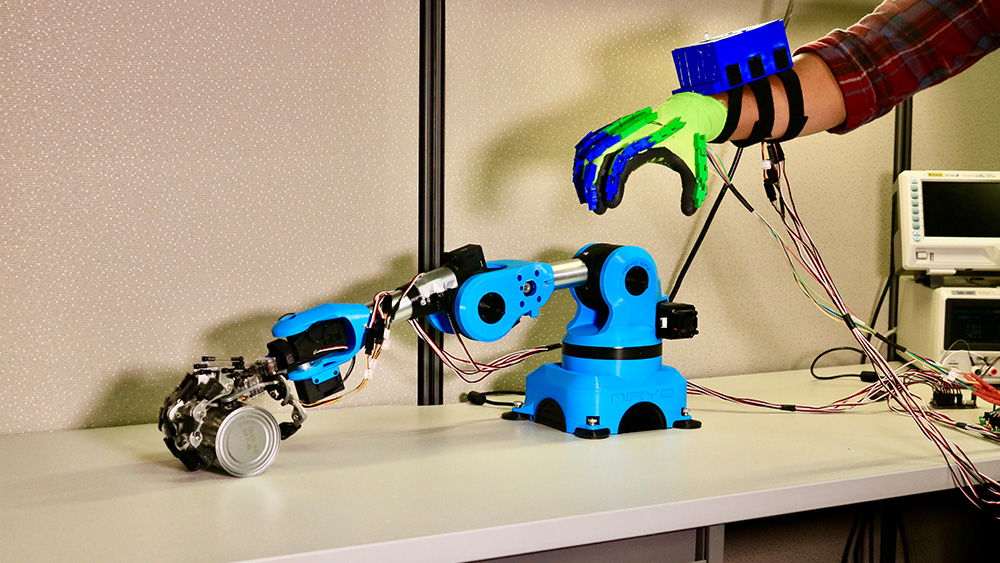

Robot-assisted surgical systems, also known as telerobotic surgical systems, are physical extensions of a surgeon. By controlling robotic fingers with movements of their own fingers, surgeons can perform intricate procedures remotely, expanding the number of patients they can treat. In addition, because the robotic fingers are tiny, surgeries can be done through much smaller incisions: surgeons no longer need to make large cuts to fit their hands inside the patient's body.

To move their robotic fingers precisely, surgeons rely on live video streamed from cameras fitted on the telerobotic arms. They watch monitors to match their own finger movements with those of the telerobotic fingers. In this way, they know where the robotic fingers are in space and how close the fingers are to each other.

However, Park noted that visual information alone is not enough to guide fine finger movements, which are especially critical when the fingers are close to the brain or other delicate tissue.

“Surgeons can only know how far apart their actual fingers are from each other indirectly, that is, by looking at where their robotic fingers are relative to each other on a monitor,” said Park. “This roundabout view diminishes their sense of how far apart their actual fingers are from each other, which then affects how they control their robotic fingers.”

To address this problem, Park and his team developed an alternative way to deliver distance information that is independent of visual feedback. By delivering electrical current at different frequencies to the fingertips through gloves fitted with stimulation probes, the researchers trained users to associate the frequency of the current pulses with distance: increasing pulse frequencies signaled a closing distance to a test object. They then tested whether users who received current stimulation along with visual information about the closing distance on their monitors estimated proximity better than those who received visual information alone.

Park and his team also tailored their technology to each user's sensitivity to electrical current frequencies. In other words, if a user could perceive a wider range of current frequencies, the distance information was delivered in smaller increments to maximize the accuracy of proximity estimation.
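To make the distance-to-frequency encoding and the per-user tailoring more concrete, here is a minimal Python sketch. It is not the researchers' implementation: the linear mapping, the hypothetical `UserProfile` fields (`f_min_hz`, `f_max_hz`, `jnd_hz`), the helper names, and all numbers are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    """Hypothetical per-user calibration; values are illustrative, not from the study."""
    f_min_hz: float  # lowest stimulation frequency the user perceives (assumed)
    f_max_hz: float  # highest frequency the user can still tell apart (assumed)
    jnd_hz: float    # smallest frequency difference the user notices (assumed, fixed in Hz)


def num_levels(profile: UserProfile) -> int:
    """Count how many distinguishable frequency levels fit in the user's range."""
    span = profile.f_max_hz - profile.f_min_hz
    # At least two levels ("far" and "touching") so the mapping is always defined.
    return max(2, int(span // profile.jnd_hz) + 1)


def distance_resolution_mm(max_distance_mm: float, profile: UserProfile) -> float:
    """Size of one distance step: a wider perceivable range gives finer steps."""
    return max_distance_mm / (num_levels(profile) - 1)


def distance_to_frequency(distance_mm: float, max_distance_mm: float,
                          profile: UserProfile) -> float:
    """Map distance to contact onto a stimulation frequency (closer = higher)."""
    levels = num_levels(profile)
    # Clamp the distance into [0, max_distance_mm].
    d = min(max(distance_mm, 0.0), max_distance_mm)
    # Proximity in [0, 1]: 0 means far away, 1 means touching.
    proximity = 1.0 - d / max_distance_mm
    # Quantize proximity to one of `levels` discrete steps.
    step = round(proximity * (levels - 1))
    step_hz = (profile.f_max_hz - profile.f_min_hz) / (levels - 1)
    return profile.f_min_hz + step * step_hz
```

For example, with the same assumed 10 Hz just-noticeable difference, a user who perceives 20 to 60 Hz gets 5 levels (2.5 mm steps over a 10 mm working range), while a user who perceives 20 to 120 Hz gets 11 levels (1 mm steps). Quantizing to levels the user can actually distinguish is one plausible way to realize "smaller increments for wider sensitivity"; the study may have calibrated users differently.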